Updated 18 July 2019 – correction in the example where only compute is considered for vNUMA

Updated 20 Oct 2017 – ‘NEW’ with Memory Considerations

Using virtualization, we have all enjoyed the flexibility to quickly create virtual machines with various virtual CPU (vCPU) configurations for a diverse set of workloads. But as we virtualize larger and more demanding workloads, like databases, on top of the latest generations of processors with up to 24 cores, special care must be taken in vCPU and vNUMA configuration to ensure performance is optimized.

Much has been said and written about how to optimally configure the vCPU presentation within a virtual machine – Sockets x Cores per Socket.

Side bar: When you create a new virtual machine, the number of vCPUs assigned is divided by the Cores per Socket value (default = 1 unless you change the dropdown) to give you the calculated number of Sockets. In PowerCLI, these two properties are NumCPUs and NumCoresPerSocket. In the example screenshot above, 20 vCPUs (NumCPUs) divided by 10 Cores per Socket (NumCoresPerSocket) results in 2 Sockets. Let’s refer to this virtual configuration as 2 Sockets x 10 Cores per Socket.

History

The ability to set this presentation was originally introduced in vSphere 4.1 to overcome operating system license limitations.
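The Sockets value in the side bar is plain integer division. The sketch below reproduces that arithmetic; the function name `virtual_sockets` is hypothetical, and rejecting a Cores per Socket value that does not divide the vCPU count evenly is an assumption of this sketch rather than a documented vSphere rule.

```python
def virtual_sockets(num_cpus: int, num_cores_per_socket: int = 1) -> int:
    """Return the socket count presented to the guest.

    Hypothetical helper: divides the vCPU count (NumCPUs) by the
    Cores per Socket value (NumCoresPerSocket), which defaults to 1.
    """
    if num_cpus < 1 or num_cores_per_socket < 1:
        raise ValueError("vCPU count and Cores per Socket must both be >= 1")
    # Assumption: only configurations where Cores per Socket divides the
    # vCPU count evenly are treated as valid in this sketch.
    if num_cpus % num_cores_per_socket != 0:
        raise ValueError("vCPU count must be a multiple of Cores per Socket")
    return num_cpus // num_cores_per_socket


# The side-bar example: 20 vCPUs with 10 Cores per Socket.
print(virtual_sockets(20, 10), "Sockets x 10 Cores per Socket")
```

With the default Cores per Socket of 1, `virtual_sockets(20)` returns 20, which is the "one socket per vCPU" presentation discussed below.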

vNUMA (virtual non-uniform memory access) is a memory-access optimization method for VMware virtual machines that helps prevent memory-bandwidth bottlenecks. With vNUMA, the hypervisor creates a NUMA topology that closely mirrors the underlying physical server topology, allowing guest operating systems and applications to place threads and memory with awareness of NUMA locality.

As of vSphere 5, those configuration items now set the virtual NUMA (vNUMA) topology that is exposed to the guest operating system.

NUMA is becoming increasingly important to ensure workloads, like databases, allocate and consume memory within the same physical NUMA node on which their vCPUs are scheduled. When a virtual machine is sized larger than a single physical NUMA node, a vNUMA topology is created and presented to the guest operating system. This virtual construct allows a workload within the virtual machine to benefit from physical NUMA while continuing to support functions like vMotion.

While the vSphere platform is extremely configurable, that flexibility can sometimes be our worst enemy in that it allows for many sub-optimal configurations.

Back in 2013, I wrote an article about how Cores per Socket could affect performance based on how vNUMA was configured as a result. In that article, I suggested different options to ensure the vNUMA presentation for a virtual machine was correct and optimal.

The easiest way to achieve this was to leave Cores per Socket at the default of 1, which presents the vCPU count as Sockets without configuring any virtual cores. Using this configuration, ESXi would automatically generate and present the optimal vNUMA topology to the virtual machine.

However, this suggestion has a few shortcomings. Since the vCPUs are presented as Sockets alone, licensing models for Microsoft operating systems and applications were potentially limited by the number of sockets.

About Mark Achtemichuk

Mark Achtemichuk currently works as a Staff Engineer within VMware’s R&D Operations and Central Services Performance team, focusing on education, benchmarking, collateral and performance architectures. He has also held various performance-focused field, specialist and technical marketing positions within VMware over the last 7 years. Mark is recognized as an industry expert and holds a VMware Certified Design Expert (VCDX#50) certification, one of fewer than 250 worldwide. He has worked on engagements with Fortune 50 companies, served as technical editor for many books and publications and is a sought-after speaker at numerous industry events. Mark is a blogger and has been recognized as a VMware vExpert from 2013 to 2016.

He is active on Twitter at @vmMarkA, where he shares his knowledge of performance with the virtualization community. His experience and expertise from infrastructure to application help customers ensure that performance is no longer a barrier, perceived or real, to virtualizing and operating an organization's software-defined assets.

Troy
If your VMs were created the old way (vCPUs set by socket count), is it worthwhile to go back and change them to the core-count method after upgrading to vSphere 6.5? I.e. VMs currently having 4 sockets x 1 core/socket.

Should this now be changed to 1 socket x 4 cores/socket? Or should this new rule of thumb only be used when creating new VMs going forward? If so, does that mean the template VMs should be changed after upgrading to vSphere 6.5? Or would you need to rebuild your templates from scratch using the new rule of thumb?

Mark Achtemichuk Post author
The configuration of the sockets and cores for the virtual machine becomes VERY important when you cross a pNUMA node. When the virtual machine is smaller than a pNUMA node, there will ‘probably’ be less impact, since the virtual machine will be scheduled into one pNUMA node anyway. So I’d suggest an approach like:
1) reconfigure all VMs that are larger than a pNUMA node (priority)
2) reconfigure templates so all new VMs are created appropriately (priority)
3) evaluate the effort of reconfiguring everything else (good hygiene)

Mark Achtemichuk Post author
As Klaus said – if your app is NUMA aware (MS SQL being the best example) – you want vNUMA enabled with an optimal config. Now if your VM is smaller than a pNUMA node, then the benefit may or may not be measurable, since the VM will be scheduled into a single pNUMA node.

So you could continue to leverage hot add for small VMs. I highly recommend not adding hot add into templates. That said, my experience is that hot add highlights a gap in operational processes. I might suggest evaluating a more comprehensive capacity and performance tool like vROps and its right-sizing reports.

Mark Achtemichuk Post author
One of the only reasons to really cross a pNUMA node with a VM would be that there isn’t enough memory on the pNUMA node to satisfy the configuration for the VM. Otherwise I suggest, as outlined above, using the max pCore count of the pSocket first before incrementing the vSocket count. You say you have 2×16 hosts, so I’d always use 1×1, 1×2, 1×3 … 1×15, 1×16, then 2×9, 2×10, etc. So no – I suggest you don’t ever use 12×1 or 16×1 as configurations. Hope that helps clarify.
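The rule of thumb in the reply above (fill the physical socket’s core count on one virtual socket, then add sockets and split cores evenly) can be written down as a small function. This is a compute-only sketch under stated assumptions: the name `recommend_topology` is hypothetical, memory is ignored, and rounding an odd count such as 17 vCPUs up to 2×9 is an interpretation of the “then 2×9, 2×10” sequence, not a documented VMware rule.

```python
import math


def recommend_topology(vcpus: int, cores_per_psocket: int) -> tuple[int, int]:
    """Return (virtual sockets, cores per socket) for a VM.

    Compute-only sketch: stay on one virtual socket until the VM needs more
    vCPUs than one physical socket has cores, then use the fewest sockets
    and spread the cores evenly across them.
    """
    if vcpus < 1 or cores_per_psocket < 1:
        raise ValueError("vcpus and cores_per_psocket must be >= 1")
    if vcpus <= cores_per_psocket:
        return 1, vcpus
    sockets = math.ceil(vcpus / cores_per_psocket)
    # Round up so every socket gets the same core count (17 vCPUs -> 2x9).
    cores = math.ceil(vcpus / sockets)
    return sockets, cores


# The 2x16 host from the reply above.
for n in (1, 2, 3, 15, 16, 17, 18, 20):
    sockets, cores = recommend_topology(n, 16)
    print(f"{n:>2} vCPUs -> {sockets}x{cores}")
```

The sketch does not check the result against the host’s physical socket count or Hyper-Threading, so anything beyond 2×16 on this host would need the separate caution about sizing past the pCore count.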

Yan
Hi Mark, I expect you would say yes (assuming there is no OS/App licensing concern and VM memory is less than a pNUMA node), or this will contradict what you mentioned in the article (extracted below).
“The easiest way to achieve this was to leave Cores per Socket at the default of 1, which presents the vCPU count as Sockets without configuring any virtual cores. Using this configuration, ESXi would automatically generate and present the optimal vNUMA topology to the virtual machine.”
Please clarify.

Gman
You picked up on this, good for you.

This is the kind of thing I’ve been seeing with VMware write-ups for years, and with VMware’s documentation being written by god knows who, you have nowhere to turn at times. I am very happy to see newer blogs addressing these concerns.

What I still see missing is that VMware bloggers often put together a good look at things without actually spelling out the action items needed, and when they are written without all the details needed, it still leaves admins guessing what to do. This is a good article in that, although confusing at first because of the change in recommendation, it provides the action items for the admins.

That being said, when you log into vCenter 6.5 and add CPUs to a new VM with the dropdown, it defaults to X number of sockets with 1 core per socket, exactly the opposite of what this article is now stating. So am I to believe that VMware is just now figuring this out about its own product? Perhaps it’s because all the guys who created this great stuff have left the organization without leaving a good trail of internal documentation? But I am glad that I came across this post.

Jason Taylor
Thanks for this article. I am somewhat confused, as I thought 1 core per socket was the recommended configuration. The 2013 article seems to imply that gave the best performance, but the table above seems to say the opposite, to increase the cores per socket. I have a physical server with 2 sockets, 8 cores per socket, so 32 logical processors with Hyper-Threading. There is 512GB of RAM in this host. This host is ESXi 5.5. I have a VM with 14 vCPUs, and it is set at 14 sockets with 1 core per socket.

It has 256GB of memory. This server runs SQL 2012 Enterprise. Should it be set to 2 sockets with 7 cores per socket?

Nuruddin
Mark, thanks for the wonderful article. Following is the query that we have and want to clarify: we’ve got 1 host with 8 physical cores, and with Hyper-Threading it shows 16 logical processors. Technically we can assign 16 vCPUs to the VM, but the article says you should not allocate more than the available physical cores to a VM, meaning we should allocate only 8. My question to you is: what is the implication if we allocate more vCPUs than the physical cores available on the host?
Thanks
Nur.

Mark Achtemichuk Post author
When you start to build virtual machines larger than the pCore count (which you can, but I consider that an ‘advanced’ activity and you need to keep consolidation ratios low), you place yourself in a situation in which you can generate contention quickly and make performance worse than if you sized the VM less than or equal to the pCore count. Remember, Hyper-Threading offers value by keeping the pCore as busy as possible using two threads. If one thread is saturating the pCore, there’s little value in using a second thread. So assuming your VM can keep 8 vCPUs busy, increasing the vCPU count doesn’t mean your VM will get more clock cycles. In fact, it may create scheduling contention and reduce overall throughput. You can build VMs larger than the pCore count when your applications value parallelization over utilization. For example, if you know the application values threads, but utilization of each thread never exceeds 50%, then 16 threads @ 50% = 8 pCore saturation.

Rekha
Hi Mark, thanks for the great write-up. It’s clear not to enable hot add, as it disables vNUMA.
1. Is it applicable for small VMs with 4 vCPUs and 4 GB RAM too?

If hot add is enabled, can I configure this VM with 4 sockets and 1 core and VMware will take care of allocating resources locally? Or should I still go with 1 socket and 4 cores?
2. For larger VMs – I have a host with 2 sockets, 14 cores per socket and 256 GB RAM (128 GB memory in one pNUMA node). Two scenarios here:
a) VM with 14 vCPUs and 32 GB memory. Is it better to configure 1 socket x 14 cores (as memory is still local)?
b) VM with 16 vCPUs and 32 GB memory.

Configure 2 sockets and 8 cores? Please clarify.

Andy C
Hi Mark, great article, which is helping me at the moment as I try to get deployments of VMs right-sized.

These articles are good to point people to. One thing that did occur to me is the Cluster on Die (CoD) feature available on some processors. I’m expecting it won’t make any difference and vNUMA will treat it the same as ‘any other’ NUMA presentation. Is it just a matter of the CoD feature increasing the available NUMA nodes? So, say, a dual-socket, 14-core host.

With 256GB RAM. CoD feature enabled. Meaning there are 4 NUMA nodes consisting of 7 cores each and 64GB local memory each. How would the ‘Optimal config’ table look in that situation? Would 3 vNUMA nodes just be presented to a VM that requires 15 vCPUs (configured with 3 sockets, 5 cores), for example? Also, in the scenario above with the VM requiring 15 vCPUs (3 vNUMA nodes), would the optimal memory for the VM in this scenario be (up to) 196GB? Thanks again for the write-up.
Andy.

Mark Achtemichuk Post author
That’s correct – using CoD will split the socket into two logical pNUMA nodes using a second home agent. So CoD ‘off’ means each socket is a single pNUMA node (with all 14 pCores associated with it) with 128GB RAM. CoD ‘on’ means that same socket now appears as two pNUMA nodes (each with 7 pCores associated with it) with 64GB RAM each. The optimal configuration would be to use the fewest number of pNUMA nodes. If you needed 15 vCPUs, one more than could fit into two pNUMA nodes, the optimal presentation would be 3×5. If each pNUMA node had 64GB RAM, then with 3×5 you could access up to 192GB RAM before requiring a 4th pNUMA node – in which case, incidentally, I’d reconfigure the VM to 16 vCPUs and present it as 4×4.
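The reply above sizes the VM by the fewest pNUMA nodes that cover both its vCPUs and its memory. A minimal sketch of that reasoning, assuming (as the reply describes) that CoD splits each socket’s cores and memory evenly in two; the names `PNumaNode`, `pnuma_node` and `presentation` are hypothetical, and hypervisor memory overhead is ignored.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PNumaNode:
    cores: int
    mem_gb: int


def pnuma_node(cores_per_socket: int, mem_per_socket_gb: int, cod: bool) -> PNumaNode:
    """Size of one pNUMA node; with Cluster on Die a socket splits into two halves."""
    split = 2 if cod else 1
    return PNumaNode(cores_per_socket // split, mem_per_socket_gb // split)


def presentation(vcpus: int, mem_gb: int, node: PNumaNode) -> tuple[int, int]:
    """Fewest pNUMA nodes covering both vCPUs and memory, as (sockets, cores per socket)."""
    nodes = max(math.ceil(vcpus / node.cores), math.ceil(mem_gb / node.mem_gb))
    # Spread cores evenly; this may round the vCPU count up (15 -> 4x4 = 16).
    return nodes, math.ceil(vcpus / nodes)


node = pnuma_node(14, 128, cod=True)  # PNumaNode(cores=7, mem_gb=64)
print(presentation(15, 192, node))    # (3, 5): three nodes, presented as 3x5
print(presentation(15, 200, node))    # (4, 4): memory forces a fourth node
```

Taking the maximum of the compute and memory node counts is what makes the memory dimension count; a compute-only calculation would stop at `math.ceil(vcpus / node.cores)`.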

Naga Cherukupalle
Mark, is this applicable in vSphere 6.0 also? The performance best practices document for 6.0 suggests using 1 core and many virtual sockets. For example, in my current vSphere environment I have a VM with 20 vCPUs (configured as Number of Virtual Sockets = 20 and Number of Cores per Socket = 1) on physical hosts with 28 physical cores (2 sockets and 4 cores per socket).

So, per your blog, can I reconfigure the VM as 20 vCPUs (Number of Virtual Sockets = 2 and Number of Cores per Socket = 10) in order to get vNUMA benefits? Please advise, as our environment is completely sized with many virtual sockets and 1 core per socket in VMs.

Mark Achtemichuk Post author
If the server only has 2 sockets with 10 cores per socket, plus Hyper-Threads (so 40 logical processors), I’d be cautious creating a single VM larger than 2×10 (see rule of thumb #6). The reason for the conservative recommendation is that “if” you saturate 20 pCores with a single VM, then the Hyper-Threads offer little value. So anything larger than 20 vCPUs may suffer, unless the VM/app values parallelization and the vCPUs don’t all run at 100%. Then you could over-provision the pCore count for a single VM. But watch contention closely – especially Co-Stop! So in the case of over-provisioning the pCore count, yes, 2×12 would be the right configuration – but watch contention closely.
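The parallelization-versus-utilization argument in the replies above (“16 threads @ 50% = 8 pCore saturation”) reduces to a simple product. This is only a first-order estimate: the function names are hypothetical, and the sketch deliberately ignores the extra throughput Hyper-Threading can add, co-scheduling effects such as Co-Stop, and other VMs on the host, which is why the replies say to watch contention closely.

```python
def pcore_demand(threads: int, avg_utilization: float) -> float:
    """Approximate physical cores a VM keeps busy: threads x average utilization."""
    if not 0.0 <= avg_utilization <= 1.0:
        raise ValueError("avg_utilization must be between 0 and 1")
    return threads * avg_utilization


def fits_in_pcores(threads: int, avg_utilization: float, pcores: int) -> bool:
    """True if the VM's estimated demand does not exceed the host's pCore count."""
    return pcore_demand(threads, avg_utilization) <= pcores


print(fits_in_pcores(16, 0.5, 8))   # True: 16 threads @ 50% on 8 pCores
print(fits_in_pcores(24, 1.0, 20))  # False: a 2x12 VM running flat out on 2x10 pCores
```

A `False` here does not mean the configuration cannot run; it means the VM is likely to compete with itself for physical cores, which is the contention the replies warn about.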

Jon Greer
Hello, I have an R510 server with (2) 6-core processors. In ESXi 6.5 the CPU selection has changed. It has a pulldown for “CPU” 1-12 (total cores available) and then “Cores per Socket”; this pulldown is the CPU count or lower. Next to that it says “Sockets:”. If I put 2 CPUs and 2 ‘Cores per Socket’ it says Sockets: 1. If I put 2 CPUs and 1 ‘Cores per Socket’ it says Sockets: 2. I cannot find an example of what this means; all of the examples just say something like pick 1 CPU and 8 cores, and I’m not sure how to do that in 6.5. If you were to install 2 Windows Server 2016 VMs on this box, one of them running SQL Server 2016, what would you set the CPU/cores per socket to? Thanks for any clarification.

Noah Engelberth
I’m confused by two of the statements in this article: “Essentially, the vNUMA presentation under vSphere 6.5 is no longer controlled by the Cores per Socket value. vSphere will now always present the optimal vNUMA topology unless you use advanced settings.”

“When a vNUMA topology is calculated, it only considers the compute dimension. It does not take into account the amount of memory configured to the virtual machine, or the amount of memory available within each pNUMA node when a topology is calculated. So, this needs to be accounted for manually.”

So, in vSphere 6.5, how are we best to ensure that high-memory but relatively low-vCPU workloads are using a vNUMA topology that matches the physical topology? I have multiple SQL guests that are allocated 8 vCPUs and 128GB of RAM.

My physical hosts are 20 cores x 2 sockets (40 cores total) with 512GB of RAM. How do I ensure that if I have 3 or more SQL guests active on the same host, they’re not running into memory/performance problems from spanning NUMA nodes? My assumption is that under normal operation, just letting VMware do its thing, I’d wind up with:
– All three guests with 1 vNUMA node, 8 cores, 128GB of RAM.
Physical allocations: the host is using some RAM out of each pNUMA bank.

Guest 1 is allocated to pNUMA bank 1 and is fine. Guest 2 is allocated to pNUMA bank 2 and is fine. Guest 3 comes along, and there isn’t enough RAM available in either pNUMA node to fully satisfy the 128GB request, so guest 3 gets its memory spanned across NUMA nodes despite a 1 vNUMA node configuration.

Mark Achtemichuk Post author
You are correct. If each VM is 1×8 with 128GB RAM, then the ESXi schedulers will put two VMs on Socket0 and one VM on Socket1. You are also correct that available memory per physical NUMA node is slightly less than 256GB, since the hypervisor uses some (let’s call it 2-4%), so the guest OS on the second VM on Socket0 will still only see 1 vNUMA node, but in fact will be accessing a small amount of memory from a different pNUMA node (less than 10GB). So should we worry about this? In my experience – No.
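To put a number on the spill described in this reply, a rough sketch, assuming a 256GB pNUMA node (512GB split across 2 sockets), that the hypervisor’s 2-4% comes evenly out of each node, and that per-VM overhead memory and other VMs can be ignored. The function name `remote_memory_gb` is hypothetical.

```python
def remote_memory_gb(node_mem_gb: float, overhead_fraction: float,
                     vm_mem_gb: list[float]) -> float:
    """Memory that spills to another pNUMA node when these VMs share one node."""
    usable = node_mem_gb * (1.0 - overhead_fraction)
    return max(0.0, sum(vm_mem_gb) - usable)


# Two 128GB VMs placed on one 256GB node.
for overhead in (0.02, 0.03, 0.04):
    spill = remote_memory_gb(256, overhead, [128, 128])
    print(f"{overhead:.0%} overhead -> about {spill:.1f}GB remote")
```

Under these assumptions the spill works out to roughly 5GB at 2% overhead and just over 10GB at 4%, the same order of magnitude as the estimate in the reply; a single 128GB VM on the node spills nothing.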

It would be rare to see it negatively affect database KPIs, since it’s a small amount of memory and still very low latency.

DG
Mark, thanks for your great insights.

I’m analyzing a potential performance issue and would like to hear your thoughts. The physical server is an AMD Opteron(tm) Processor 6378.
CPU Packages: 2
CPU Cores: 32
CPU Threads: 32
I believe these are 8 cores per socket with HT to give 16 logical processors per socket. The NUMA node count is 4, so each of the 4 pNUMA nodes is 8 cores with 127GB. The guest VM is allocated 16 vCPUs and 64GB. numactl shows a single vNUMA node, but I feel it could be spanning 2 pNUMA nodes.

It is possible that non-local memory access is involved and the application is suffering performance issues as a result. vmstat and iostat on the guest VM do not indicate any bottleneck, but the application is suffering from long Java GC pauses. I currently don’t have access to the host physical server and have requested NUMA stats from esxtop. Meanwhile, I’d appreciate your thoughts.

Mark Achtemichuk Post author
The AMD Opteron 6378 is a multi-chip module based on the Piledriver architecture. AMD doesn’t use Hyper-Threading, which is an Intel-only SMT technology.

Great write-up, but the one thing I have found with testing different configurations is this: it makes no difference how many sockets you provision vs cores, or vice versa; vNUMA always does what it wants by default, which is to divide up the vCPUs across the underlying NUMA nodes of your host as soon as you go past 8 vCPUs and surpass the number of cores in the NUMA node. Even if I have a quad-socket server, and I exceed 8 vCPUs and exceed the core count in a single NUMA node, if I provision this VM with 4 sockets it will still not show 4 NUMA nodes in the guest OS, but just 2. The cores vs sockets setting means absolutely nothing other than for licensing issues with certain products. The only time it goes past 2 NUMA nodes in the guest OS is when your vCPU allocation exceeds the number of CPUs in 2 of the NUMA nodes.

And it always seems to divide evenly across the NUMA nodes that it presents to the guest OS. So, based on this, the recommendation to allocate VMs in a cores-per-socket fashion is irrelevant no matter how many vCPUs you need to allocate. VMware vNUMA doesn’t present NUMA nodes based on how many sockets you provision when allocating vCPUs.

TEST it out, folks, and you will see. Bottom line, vNUMA does its own thing regardless of what you want to do. You would need to enable the advanced parameters to do what you want to do.

But I wouldn’t; VMware has set this up really nicely. Also, another takeaway: just keep your VM vCPU allocations under the number of cores in your NUMA node. Otherwise, in a highly shared environment, not only do you introduce remote memory access in NUMA, but your CPU ready times will go up and you might not get any gains from additional CPUs at all. Depending, of course.

I need to correct my statement above: after further review and testing in v6.5, the statement “it makes no difference how many sockets you provision vs cores, or vice versa, vNUMA always does what it wants by default” is incorrect. Please disregard this statement. You do have control over cores per socket when vNUMA becomes enabled, and once it is, if you deploy a VM with 4 virtual sockets you will see those 4 sockets presented to the guest OS as NUMA nodes. What I do see is that if you deploy a VM with more virtual sockets than the number of NUMA nodes in the host, vNUMA does some math, adjusts the number of virtual sockets to an optimal number and presents that. On a quad-socket server, I tested changing the number of virtual sockets while leaving the same number of vCPUs for each test, and the result was that sometimes I would end up with 3 NUMA nodes presented, and sometimes 4, etc. So it does make sense to deploy cores per socket and let VMware handle the NUMA presentation to the guest.

FO
Hi Mark, the new Xeon Gold and Platinum processors can only address 768 GB RAM per socket. If you want 1.5TB RAM per socket, you have to buy an M variant.

Assume I have a server with, for example, 2 sockets, each socket with 22 cores and 768 GB RAM per socket (a total of 1.5 TB per server). As long as I configure my VMs with.

Alain Maynez
Hello Mark, standardization is our day-to-day practice. All of our ESXi hosts are dual-socket with different core-count and memory configurations; they go from 8, 10 and 14 cores/socket and memory from 96, 128, 256 and 384 GB. We currently still run vSphere 6.x. We have VMs in our environment that are configured with more memory than a vNUMA node handles, but also small VMs where memory is equal to or smaller than a vNUMA node. In this scenario, if we want to standardize across the board, and knowing that all hosts are 2 sockets, would the SECOND TABLE be the way to go even if some of our VMs have equal or smaller memory configs than the vNUMA node?

Is there a performance impact from doing this? Thanks.

Aviv Cohen
Hello there, I have a few questions regarding our SAP systems on VMs. First, this is our landscape:
VMware 6.5 / 5 ESXi 6.5 hosts (IBM Flex System x240 / Intel(R) Xeon(R) CPU E5-2650 0 @ 2.00GHz)
Each server: total memory 191.97 GB / CPU model Intel(R) Xeon(R) CPU E5-2650 0 @ 2.00GHz / processor speed 2 GHz / processor sockets 2 / processor cores per socket 8 / logical processors 32 / Hyper-Threading enabled / 2 NUMA nodes, 96 GB RAM each.
Our SAP systems are all VMs.
Questions:
1. If my SAP system (including the Oracle database) requires 32 vCPUs / 92GB RAM, what is the best configuration: 1S x 32 cores / 2S x 16 cores / 4S x 8 cores / 8S x 4 cores?
2. Should big SAP systems (same as question one) be the only VM on the ESXi host?
3. How is it possible to configure a single VM with 4 sockets if the ESXi host only has 2 sockets?

Or how is it possible to give a VM 1 socket with 12 cores per socket?
Question about NUMA:
1. Is NUMA the socket + system bus RAM on the ESXi host?
Thanks
Aviv Cohen.

BCT-Tech
Hi Mark, thanks for the great article. We are looking to optimize the CPU performance of a single VM (no other VMs on the host). What would be the optimal CPU configuration for a single VM on this host?
ESXi 5.5 U3
2 CPU sockets
8 cores each
Hyper-Threading enabled
32 logical processors
128 GB RAM
VM currently configured for 32 GB RAM (can bump up to 128 since there are no other VMs)
VM currently configured for 4 CPU sockets, 4 cores per socket
What about Hyper-Threading enabled vs disabled in this scenario? We are open to reducing vCPUs to a single NUMA node if it will improve overall performance. Real-time CPU usage is averaging about 10% on the VM.

Jerry
Hi Mark! Suppose a Dell R815 with AMD Opteron 6300 series processors, 32 cores total.

256 GB of RAM. 4 sockets, 8 cores per socket, and VMware sees 8 NUMA nodes! So 64 GB per NUMA node. So, you have 2 NUMA nodes inside 1 socket! Mystery: how many sockets and cores should I set on a VM that requires:
– 10 cores and 64 GB RAM
– 10 cores and 128 GB RAM
– 10 cores and 192 GB RAM
Sockets have 8 cores, but does NUMA separate/take into consideration the cores as well? So 1 NUMA node = 4 cores/64 GB of RAM? Or is this an incorrect approach? Thanks!

Bkchs
Hello, this example mentioned in the article is confusing to me:
“Example: An ESXi host has 2 pSockets, each with 10 Cores per Socket, and has 128GB RAM per pNUMA node, totalling 256GB per host. If you create a virtual machine with 128GB of RAM and 1 Socket x 8 Cores per Socket, vSphere will create a single vNUMA node. The virtual machine will fit into a single pNUMA node. If you create a virtual machine with 192GB RAM and 1 Socket x 8 Cores per Socket, vSphere will still only create a single vNUMA node even though the requirements of the virtual machine will cross 2 pNUMA nodes, resulting in remote memory access. This is because only the compute dimension is considered. The optimal configuration for this virtual machine would be 2 Sockets x 4 Cores per Socket, for which vSphere will create 2 vNUMA nodes and distribute 96GB of RAM to each of them.”
Is this correct??? As of vSphere 6.5, changing the ‘cores per socket’ value no longer influences vNUMA or the configuration of the vNUMA topology.

Alan
Looking for advice on the question below. We use NVIDIA GRID, and up to now, on an ESX server with two 16-core processors and 512GB RAM, we have, say, 4 VMs running with 8 vCPUs and 128GB RAM each. Nice and easy, no problem, but what I would really like to do is give each VM 28 vCPUs and 128GB RAM. The reason for this is that each VM is not fully loaded CPU-wise all the time, and there is a task that the workstation does that really needs more cores when that task is running. So my idea is that when a VM needs the CPU, it gets it.
I realise that if all four VMs were to run the task that needs more cores at the same time, then they would just share the CPU. Is this a bad idea? It just looks like a waste to have dedicated resources applied to a VM that can’t be used by another when it is not busy.

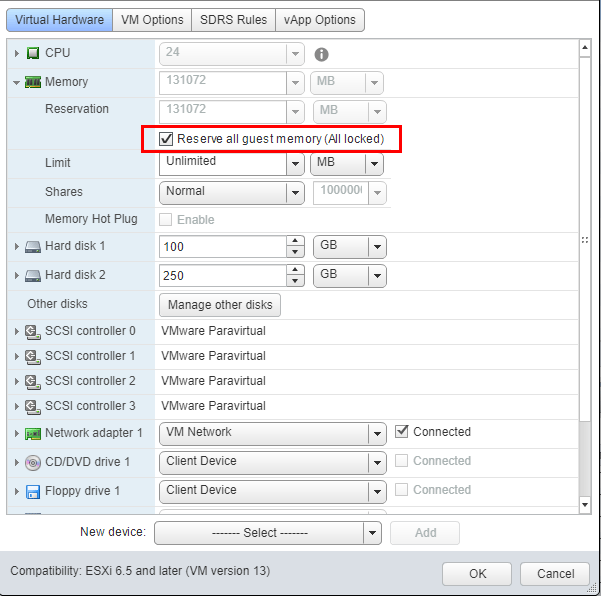

Some changes have been made in ESXi 6.5 with regard to sizing and configuration of the virtual NUMA topology of a VM. A big step forward in improving performance is the decoupling of the Cores per Socket setting from the virtual NUMA topology sizing.

Understanding elemental behavior is crucial for building a stable, consistent and properly performing infrastructure. If you are using VMs with a non-default Cores per Socket setting and planning to upgrade to ESXi 6.5, please read this article, as you might want to set a host advanced setting before migrating VMs between ESXi hosts.

More details about this setting are located at the end of the article, but let’s start by understanding how the CPU setting Cores per Socket impacts the sizing of the virtual NUMA topology.

Cores per Socket

By default, a vCPU is a virtual CPU package that contains a single core and occupies a single socket. The setting Cores per Socket controls this behavior; by default, this setting is set to 1. Every time you add another vCPU to the VM, another virtual CPU package is added, and consequently the socket count increases.

Virtual NUMA Topology

Since vSphere 5.0, the VMkernel exposes a virtual NUMA topology, improving performance by exposing NUMA information to guest operating systems and applications.